AquaTriV Dataset

AquaTriV: An Underwater Multi-Scene, Multi-Modal Dense SLAM Dataset with Active, Passive, Neuromorphic Vision for Localization and Mapping Evaluation

25 sequences · 255GB · 6.9h · 2.8km

Passive, active, and neuromorphic vision with IMU, DVL, and pressure sensors for robust perception

High-fidelity dense point clouds acquired by a 3D laser scanner for mapping evaluation

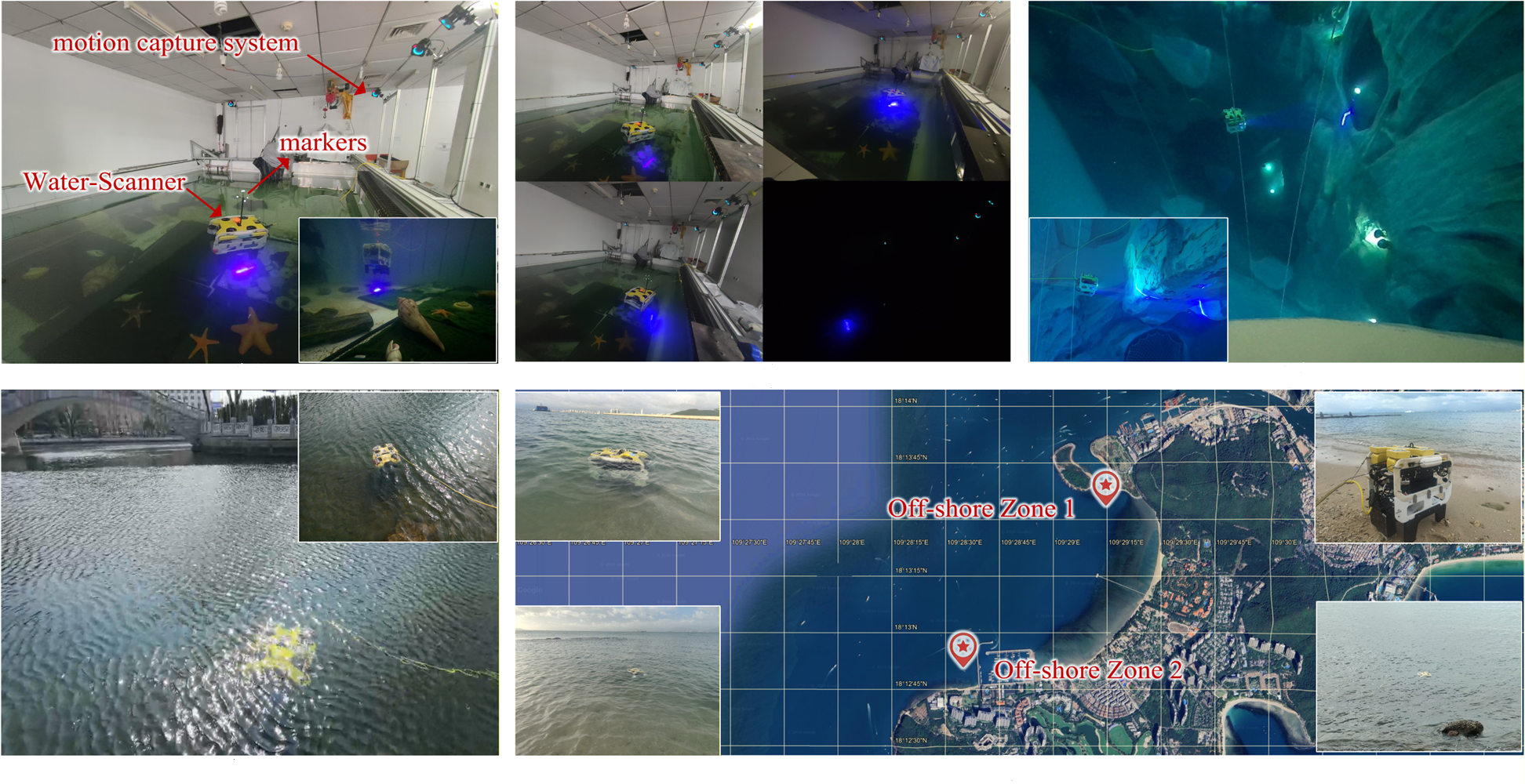

Motion capture system (indoor) and INS-SfM fusion (outdoor) for localization evaluation

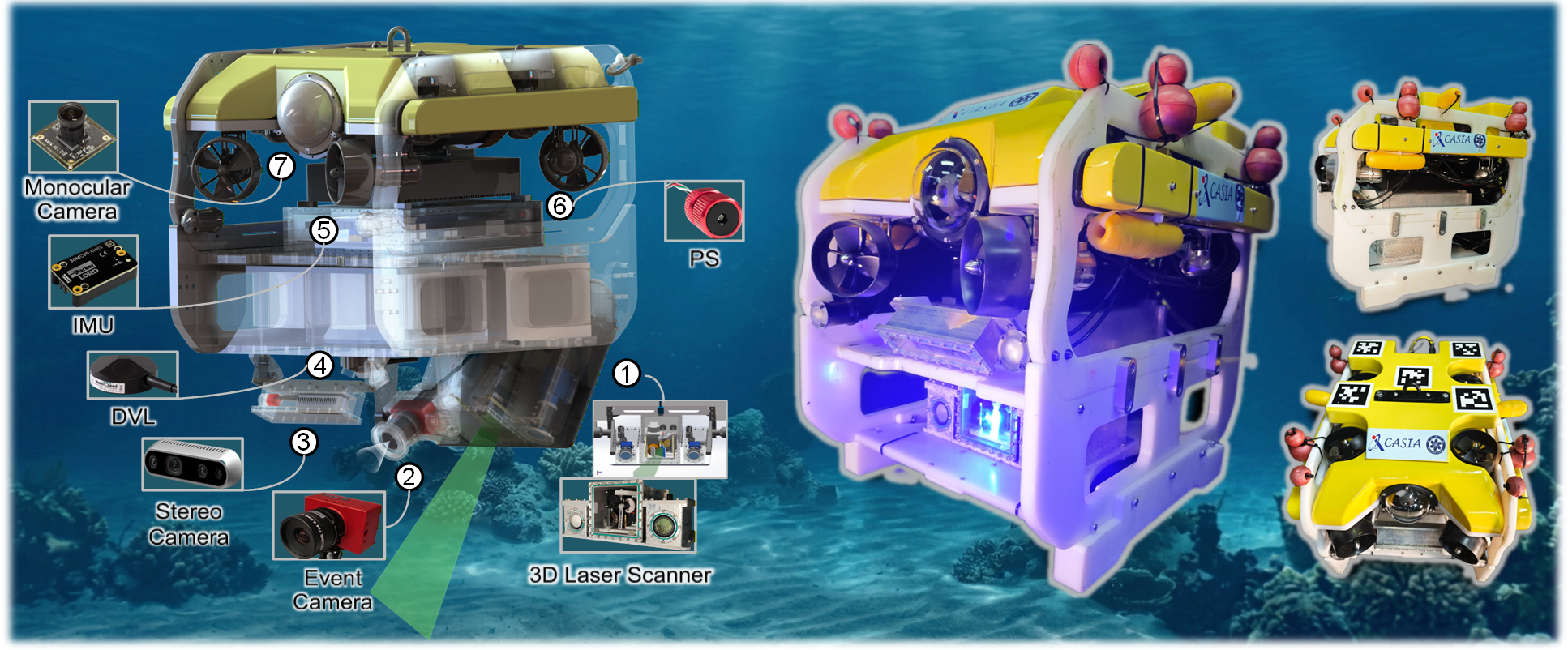

Model: FindROV H20

Weight: 23 kg

Max Depth: 300 m

Velocity: 2 knots

Cable: 100 m

Model: 3DM-CV5-25

Freq: 100 Hz

R/P: ±0.5°

Yaw: ±1°

Mag: ±2.5 Gauss

Model: A50

Beam: 4-beam

Angle: 22.5°

Freq: 4–26 Hz

Res: 0.1 mm/s

Model: B30

Range: 0–30 bar

Depth: 300 m

Acc: ±200 mbar

Res: 0.2 mbar

View: Front

USB RGB

Res: 1920×1080

Freq: 30 Hz

FoV: 80°×64°

View: Down

Model: D435i

Res: 640×480

Freq: 30 Hz

FoV: 87°×58°

Model: DAVIS346

Resolution: 346 × 260

Temporal: 1 μs

Latency: < 1 ms

Dynamic Range: 120 dB

Freq: 70 Hz

Points: 512

λ: 450 nm

Power: 3 W

Angle: 35°

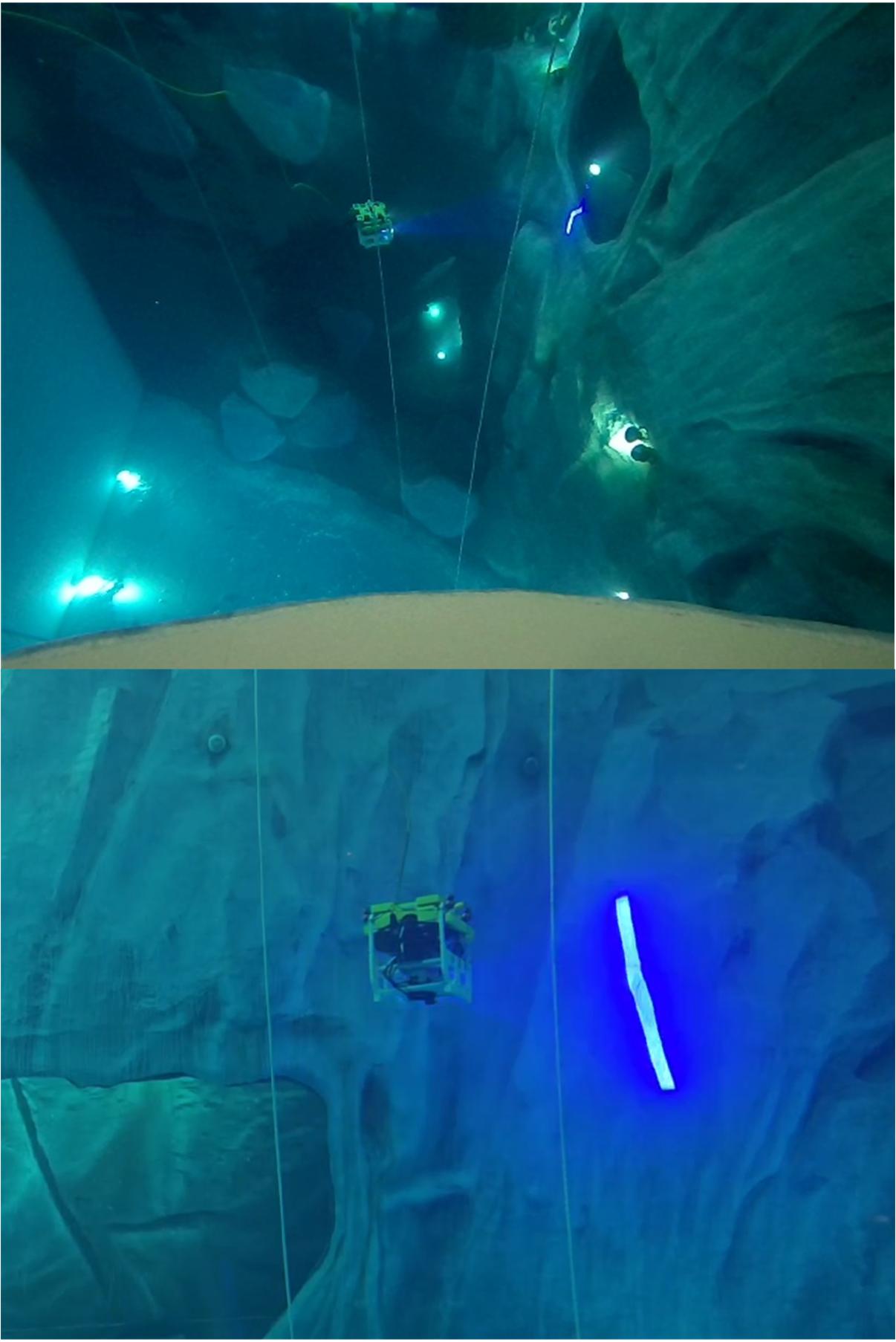

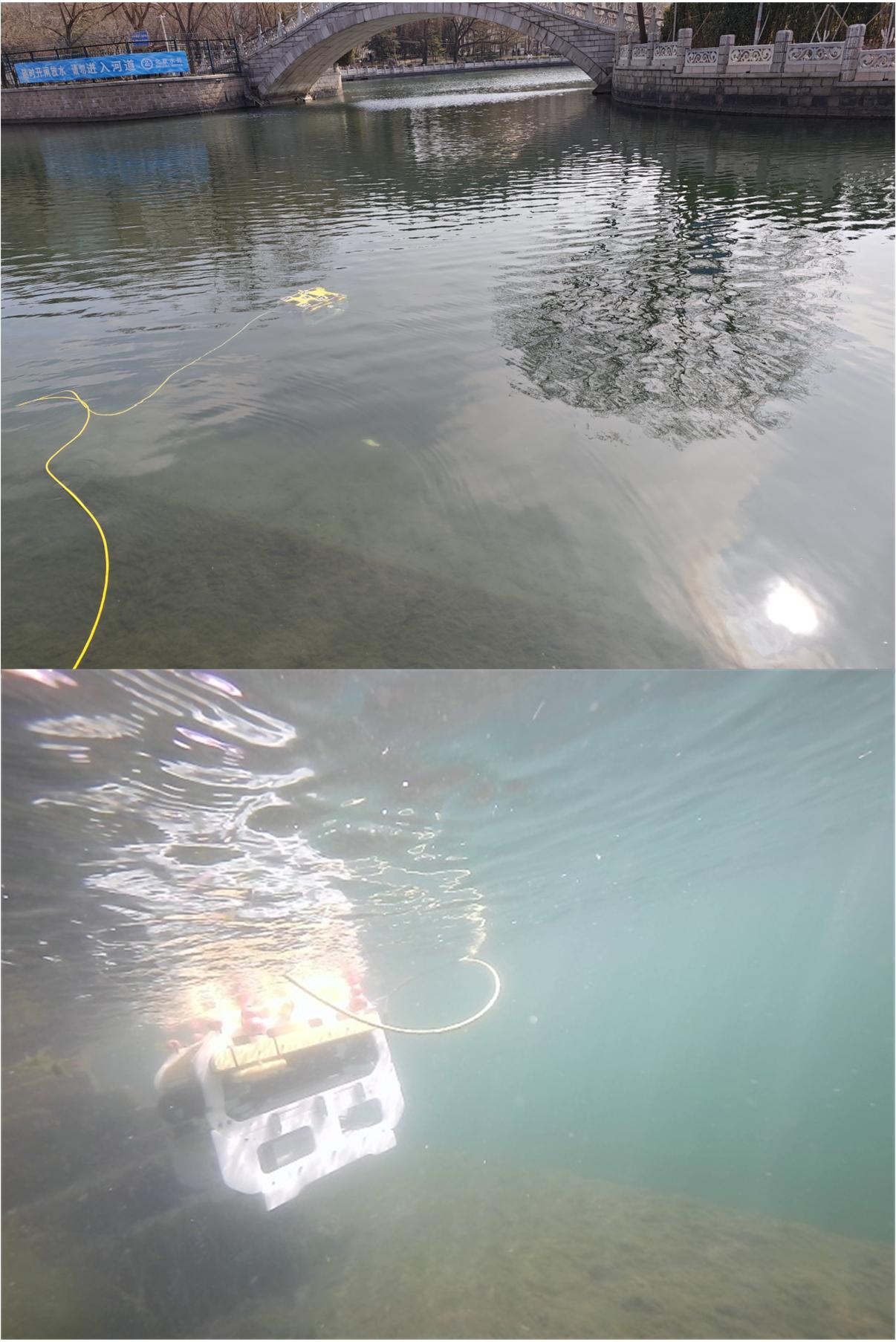

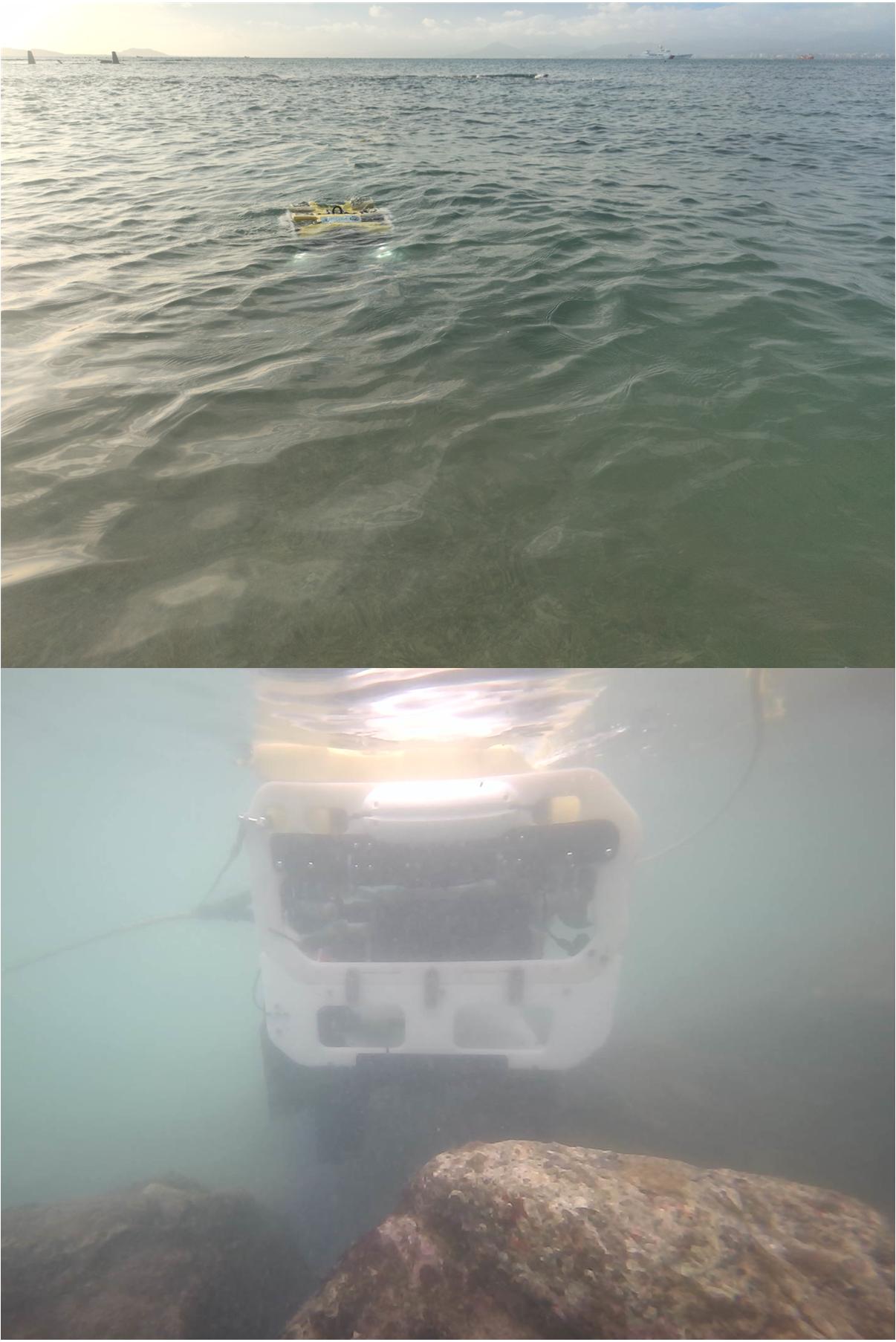

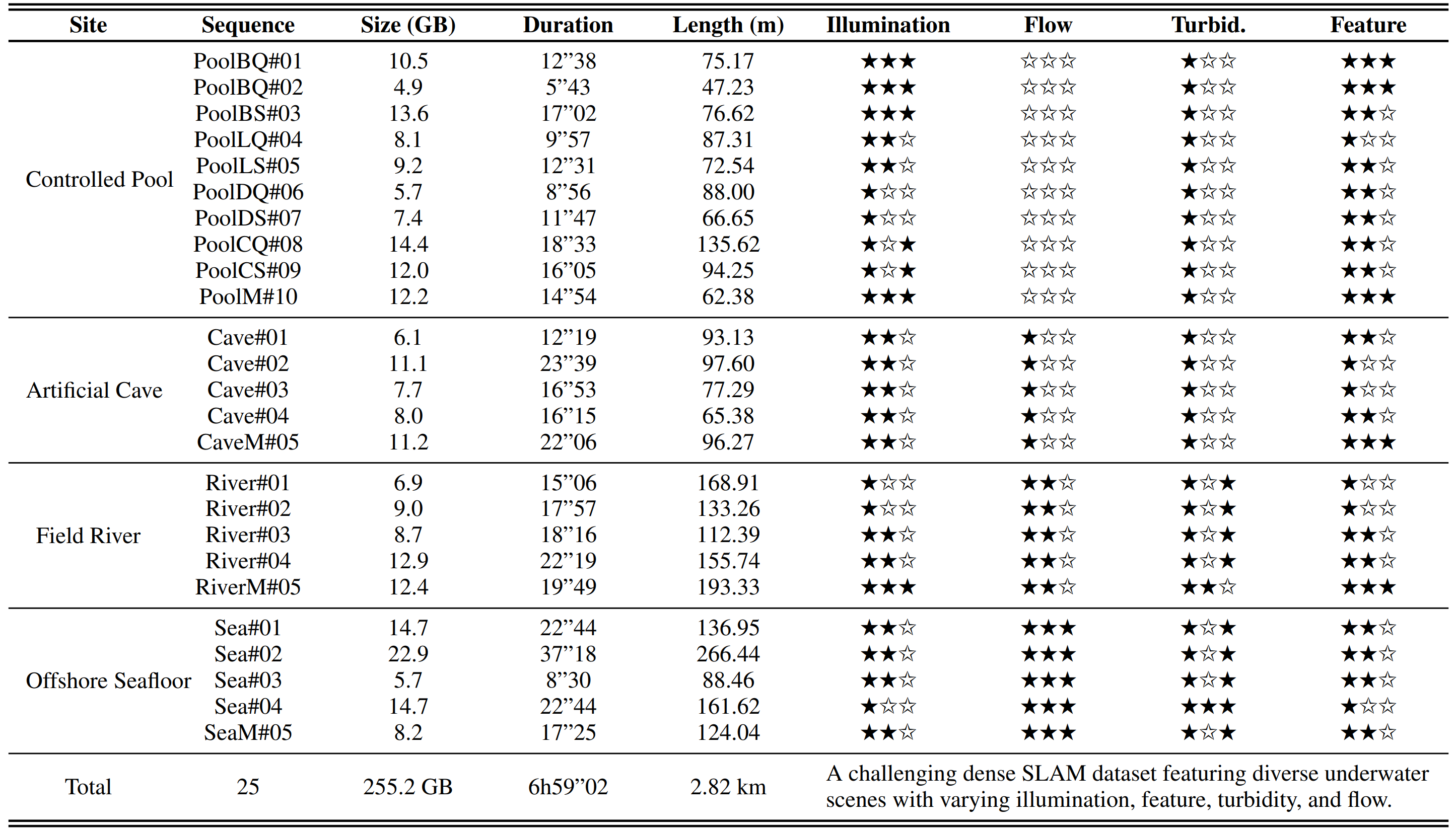

Scene-wise characteristics in the AquaTriV dataset.

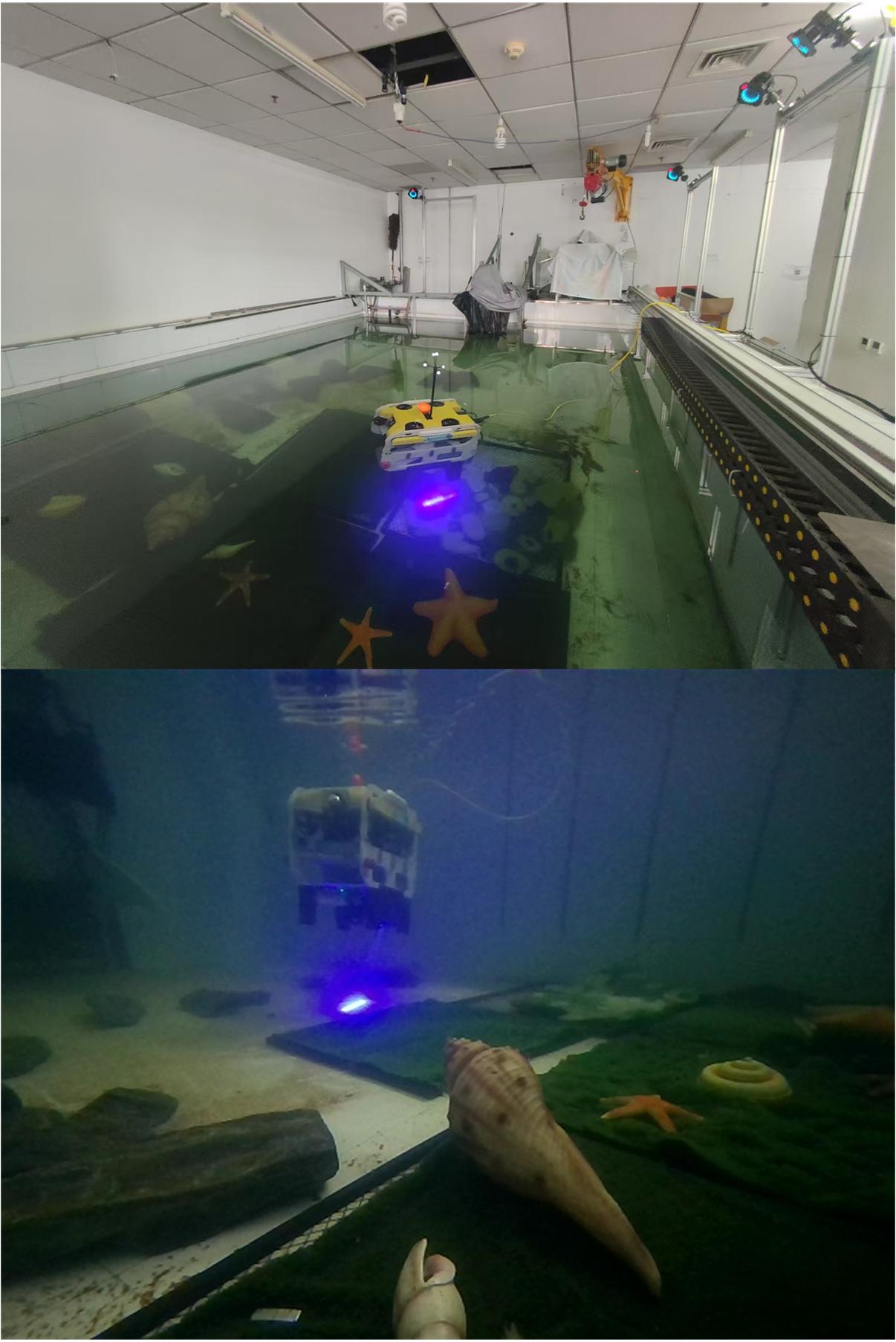

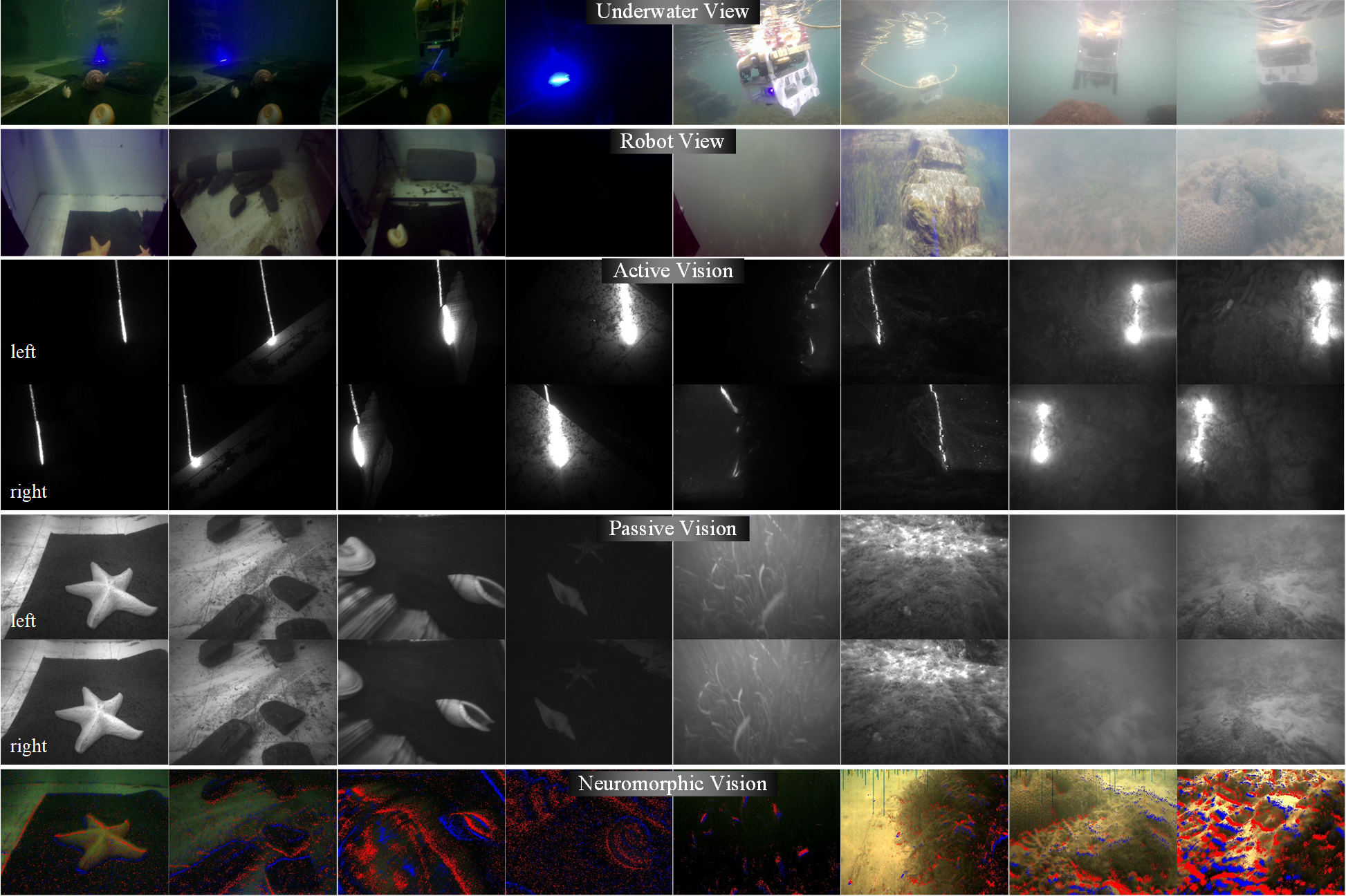

We provide multi-modal raw sensor data, including active, passive, and neuromorphic vision streams, along with synchronized navigation measurements. Representative visual samples and the ROS topic formats are shown below.

Examples of visual raw images in the AquaTriV dataset.

| Type | Topic Name | Message Type | Rate (Hz) |
|---|---|---|---|
| Active Vision | /scanner/left_image/compressed | sensor_msgs/CompressedImage | 100 |
| Active Vision | /scanner/right_image/compressed | sensor_msgs/CompressedImage | 100 |
| Passive Vision | /stereo/infra1/compressed | sensor_msgs/CompressedImage | 30 |
| Passive Vision | /stereo/infra2/compressed | sensor_msgs/CompressedImage | 30 |
| Passive Vision | /stereo/monocular/compressed | sensor_msgs/CompressedImage | 30 |
| Passive Vision | /stereo/imu | sensor_msgs/Imu | 200 |
| Neuromorphic Vision | /dvs/events | dvs_msgs/EventArray | 30 |
| Neuromorphic Vision | /dvs/image_raw/compressed | sensor_msgs/CompressedImage | 30 |
| Neuromorphic Vision | /dvs/event_rendering/compressed | sensor_msgs/CompressedImage | 30 |
| Neuromorphic Vision | /dvs/imu | sensor_msgs/Imu | 200 |
| AHRS | /imu/data | sensor_msgs/Imu | 200 |
| AHRS | /imu/mag | sensor_msgs/MagneticField | 50 |
| DVL | /dvl/data | dvl_a50_2/DVL | 12 |
| DVL | /dvl/velocity | sensor_msgs/Imu | 12 |
| DVL | /dvl/position | sensor_msgs/Imu | 4 |
| Pressure | /pressure_sensor/depth | sensor_msgs/FluidPressure | 60 |
| Bonus | /robotview/monocular | sensor_msgs/CompressedImage | 30 |
| Bonus | /waterview/monocular | sensor_msgs/CompressedImage | 30 |
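As a rough sanity check when working with a recording, the nominal rates in the topic table can be turned into expected message counts for a clip of known duration. The sketch below is not part of the dataset tooling: `NOMINAL_RATE_HZ` copies only a few rows of the table, `expected_count` and `rate_ok` are hypothetical helper names, and the 10% tolerance is an arbitrary illustrative choice.

```python
# Nominal rates (Hz) copied from a few rows of the topic table.
NOMINAL_RATE_HZ = {
    "/scanner/left_image/compressed": 100,
    "/stereo/infra1/compressed": 30,
    "/imu/data": 200,
    "/dvl/data": 12,
    "/pressure_sensor/depth": 60,
}


def expected_count(topic, duration_s):
    """Number of messages expected on `topic` over `duration_s` seconds."""
    return round(NOMINAL_RATE_HZ[topic] * duration_s)


def rate_ok(topic, observed_count, duration_s, tolerance=0.10):
    """True if the observed count is within `tolerance` of the nominal count.

    The 10% default is an assumed threshold, not a dataset specification.
    """
    expected = expected_count(topic, duration_s)
    return abs(observed_count - expected) <= tolerance * expected


if __name__ == "__main__":
    # A 60 s clip at 200 Hz should hold roughly 12000 AHRS messages.
    print(expected_count("/imu/data", 60.0))
    # 700 DVL messages in 60 s is close to the nominal 12 Hz.
    print(rate_ok("/dvl/data", observed_count=700, duration_s=60.0))
```

A large shortfall on one topic usually points to dropped frames or a sensor that started late, which is worth knowing before feeding the sequence to a SLAM pipeline.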

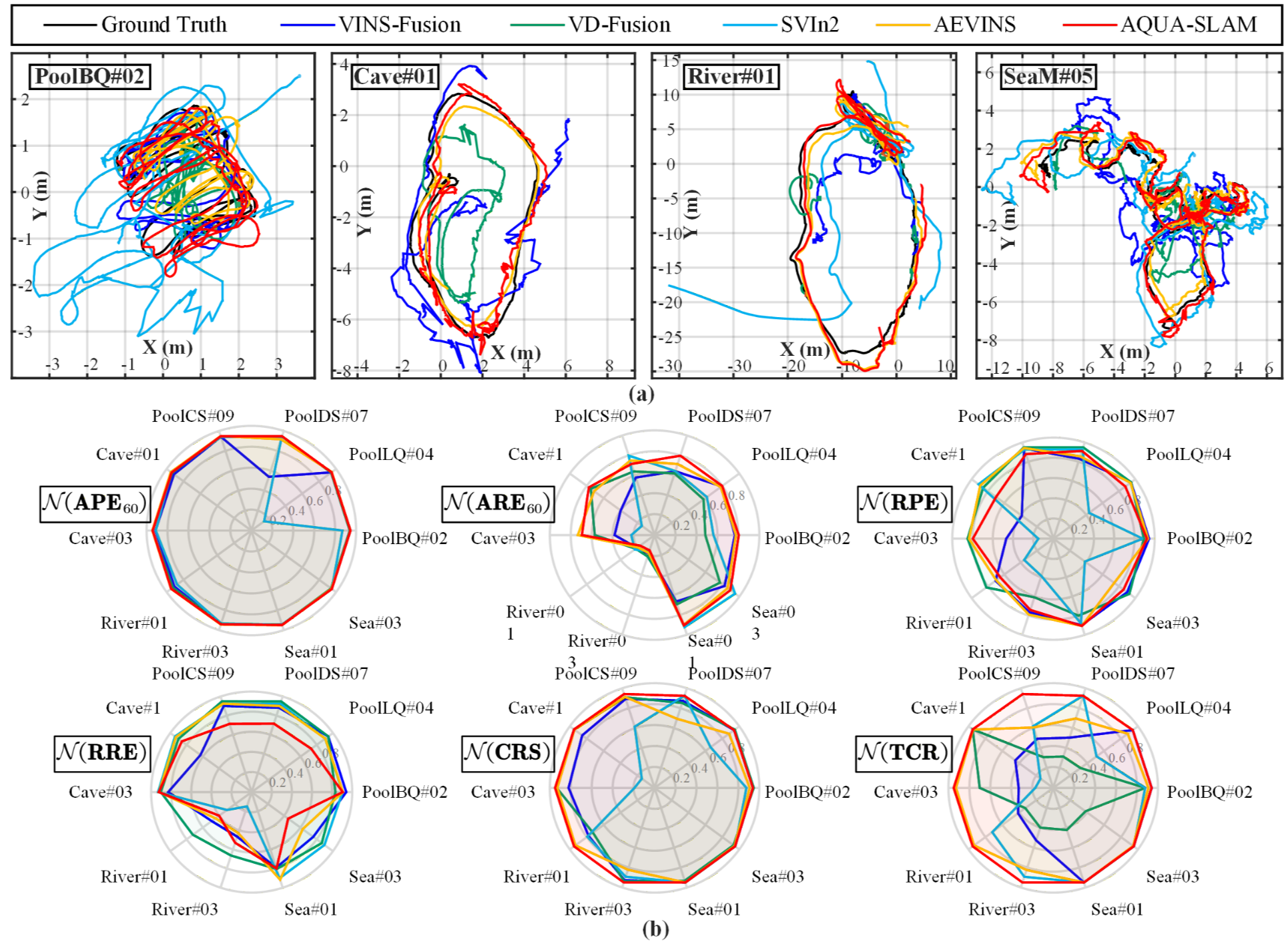

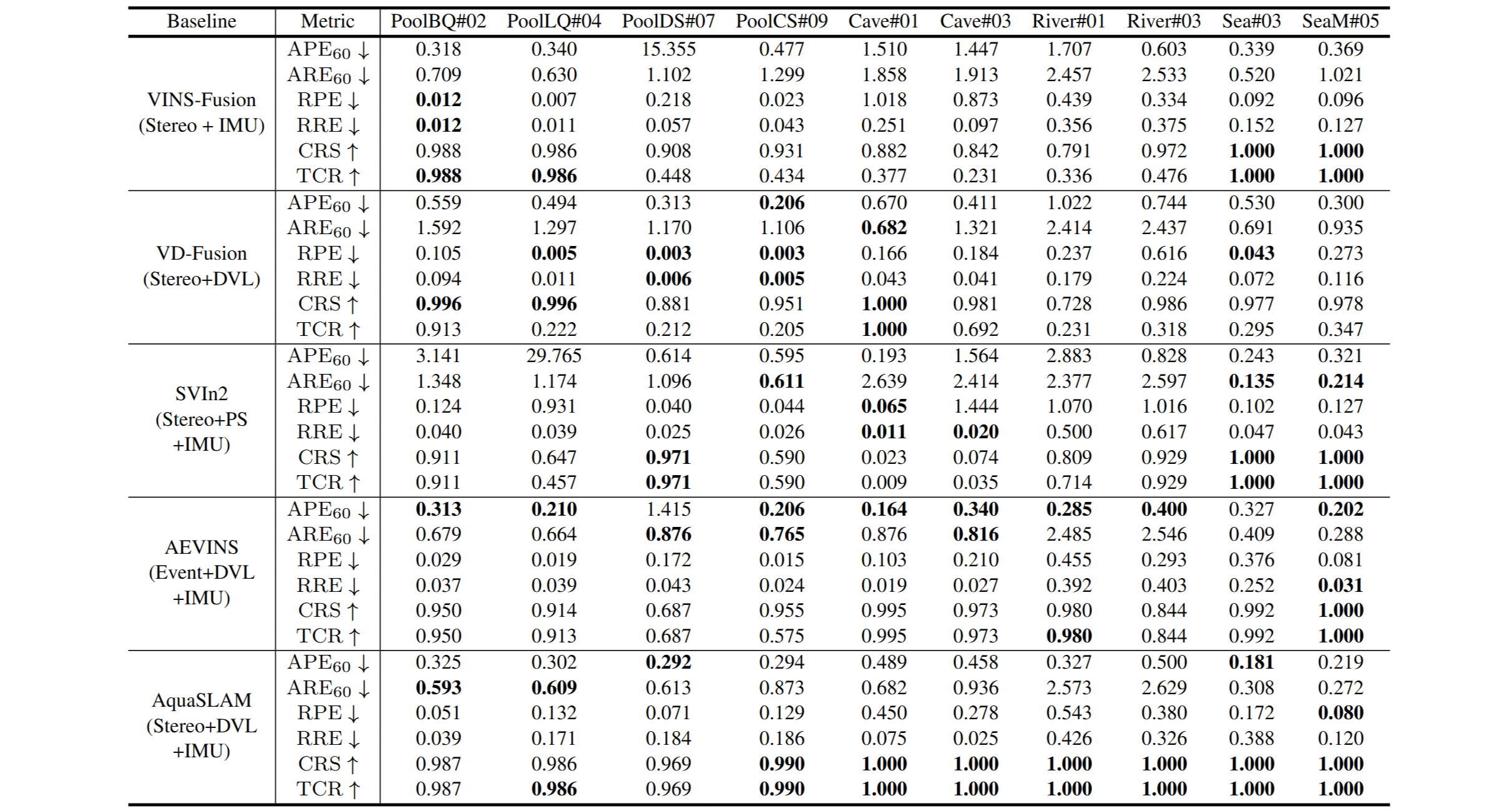

We evaluate classical and multi-modal visual-inertial SLAM systems including VINS-Fusion, SVIn2, VD-Fusion, AEVINS and AquaSLAM. These methods represent state-of-the-art pipelines combining stereo vision, IMU, DVL, and event cameras.

Localization evaluation results of the five baseline methods on the AquaTriV dataset.

Metrics include APE, ARE, RPE, RRE for accuracy, and CRS/TCR for robustness and tracking continuity.
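To make the accuracy metrics concrete, here is a minimal sketch of translation-only absolute and relative errors. It assumes the estimated and ground-truth trajectories are already time-associated and expressed in the same frame, and it compares world-frame position increments rather than full SE(3) relative poses. The exact definitions used in the AquaTriV benchmark are not given here and may differ; the function names `ape_rmse` and `rpe_rmse` are illustrative.

```python
import math


def ape_rmse(est, gt):
    """RMSE of per-pose translation error between two aligned trajectories."""
    if len(est) != len(gt) or not est:
        raise ValueError("trajectories must be non-empty and equally long")
    sq = [sum((e - g) ** 2 for e, g in zip(p, q)) for p, q in zip(est, gt)]
    return math.sqrt(sum(sq) / len(sq))


def rpe_rmse(est, gt, delta=1):
    """RMSE of the error in translation increments over `delta` frames.

    Simplification (assumption): rotation is ignored and increments are
    taken in the world frame, not in the local body frame.
    """
    if len(est) != len(gt) or len(est) <= delta:
        raise ValueError("need equally long trajectories longer than delta")
    errs = []
    for i in range(len(est) - delta):
        d_est = [b - a for a, b in zip(est[i], est[i + delta])]
        d_gt = [b - a for a, b in zip(gt[i], gt[i + delta])]
        errs.append(sum((x - y) ** 2 for x, y in zip(d_est, d_gt)))
    return math.sqrt(sum(errs) / len(errs))


if __name__ == "__main__":
    gt = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
    # Same motion as gt, but shifted by a constant (3, 4, 0) offset.
    est = [(x + 3.0, y + 4.0, z) for x, y, z in gt]
    print(ape_rmse(est, gt))  # absolute error sees the offset
    print(rpe_rmse(est, gt))  # relative error does not
```

The example illustrates why both kinds of metric are reported: a constant offset inflates the absolute error while leaving the relative error at zero, whereas slow drift shows up mainly in the relative error over longer intervals.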

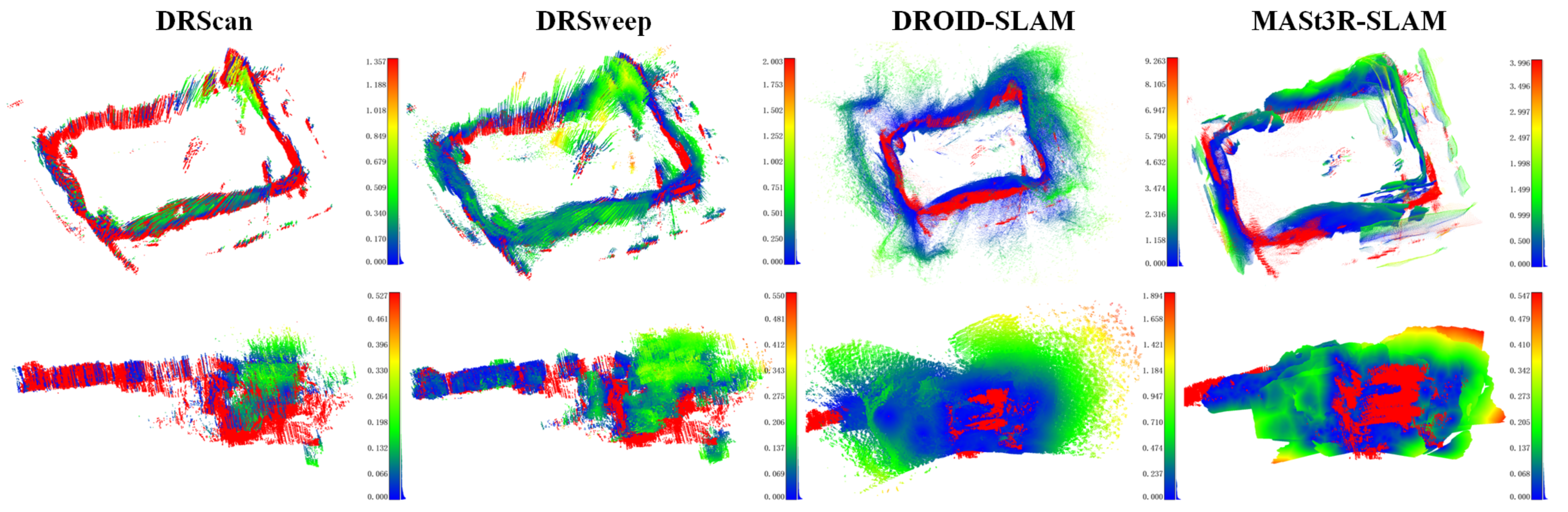

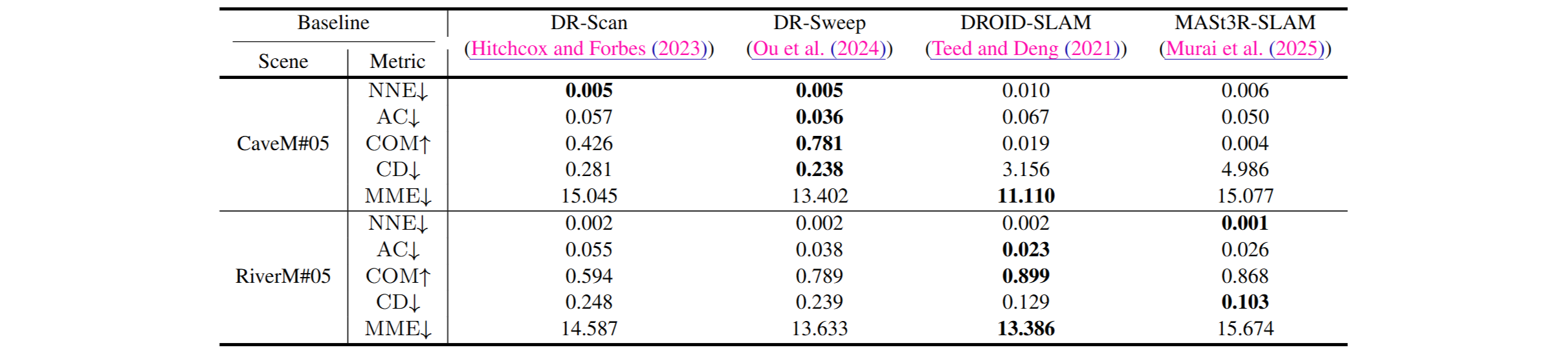

We compare representative dense mapping and neural reconstruction baselines, including DR-Scan, DR-Sweep, DROID-SLAM, and MASt3R-SLAM, covering both geometric and learning-based approaches.

Dense mapping evaluation results of the four baseline methods on the AquaTriV dataset.

Evaluation metrics include nearest neighbor error (NNE), completeness (COM), accuracy (AC), Chamfer distance (CD), and mesh metric error (MME).
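The point-cloud metrics can be illustrated with a brute-force sketch. It assumes one common convention: accuracy is the mean nearest-neighbor distance from the reconstruction to the reference scan, completeness is the same distance in the opposite direction, and Chamfer distance is their sum. The benchmark's own definitions (for example squared distances or thresholded ratios) are not stated here and may differ; all function names below are illustrative.

```python
import math


def _nearest_dist(p, cloud):
    """Euclidean distance from point `p` to its nearest neighbor in `cloud`."""
    return min(math.dist(p, q) for q in cloud)


def mean_nn_distance(source, target):
    """Mean nearest-neighbor distance from every source point to `target`."""
    if not source or not target:
        raise ValueError("both point clouds must be non-empty")
    return sum(_nearest_dist(p, target) for p in source) / len(source)


def accuracy(recon, reference):
    """How far reconstructed points lie from the reference (assumed definition)."""
    return mean_nn_distance(recon, reference)


def completeness(recon, reference):
    """How far reference points lie from the reconstruction (assumed definition)."""
    return mean_nn_distance(reference, recon)


def chamfer_distance(recon, reference):
    """Symmetric sum of the two directed mean distances."""
    return accuracy(recon, reference) + completeness(recon, reference)


if __name__ == "__main__":
    reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
    partial = [(0.0, 0.0, 0.0)]  # exact, but covers only half the reference
    print(accuracy(partial, reference))
    print(completeness(partial, reference))
    print(chamfer_distance(partial, reference))
```

This O(N·M) loop is only suitable for small clouds; dense laser scans would need a spatial index such as a k-d tree.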

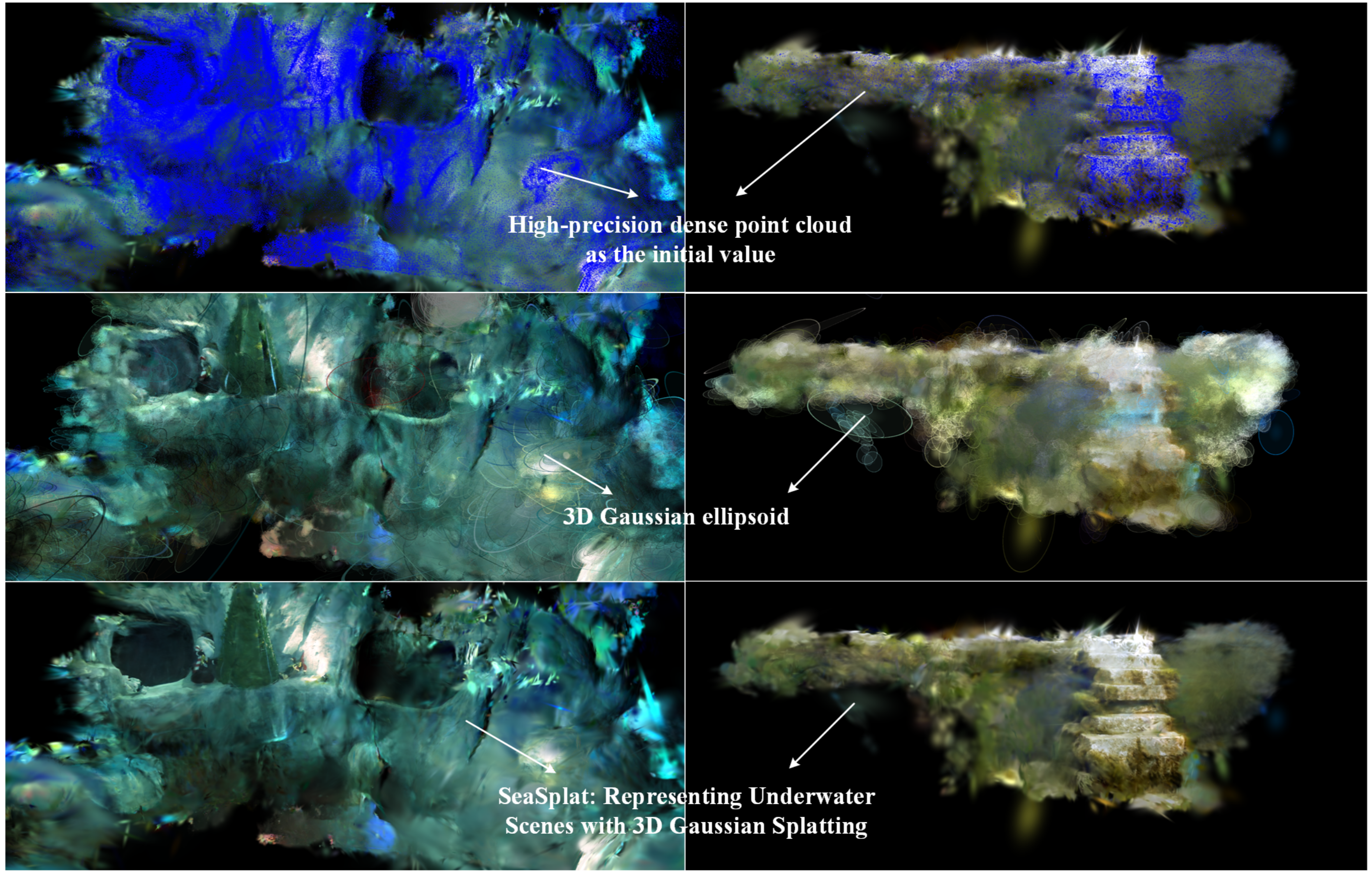

Recent neural rendering approaches, particularly 3D Gaussian Splatting (3DGS), require stable and sufficiently dense geometric priors, which are often lacking in underwater datasets relying on sparse SfM-based reconstructions. By deploying SeaSplat on AquaTriV and leveraging dense laser-scanned point clouds for initialization, we achieve stable optimization and high-fidelity photo-realistic reconstruction across diverse underwater scenes.